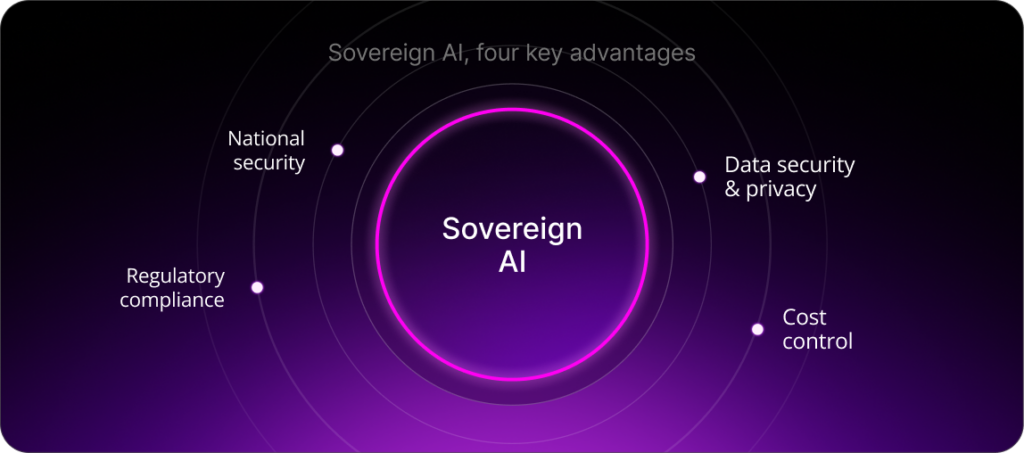

- Sovereign AI is gaining traction across industries and governments, giving them greater control over AI models, data, and compute resources.

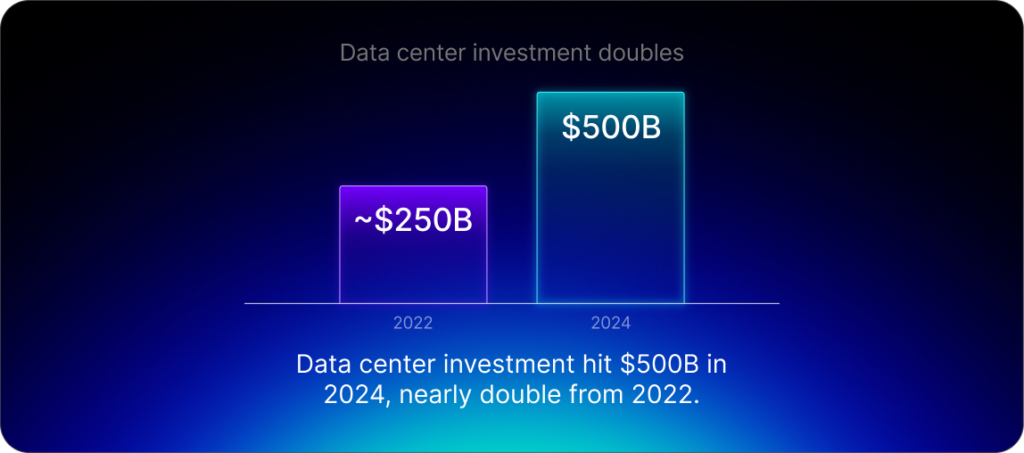

- Global investment in data centers reached $500 billion in 2024.

- The carbon intensity of a data center’s operations depends heavily on the energy mix of its local grid.

Artificial intelligence has crossed a threshold. It is no longer just a feature embedded in a product or a category of software to be evaluated and adopted. It is becoming systemic infrastructure, underpinning a vast range of uses across industries. Like traditional infrastructure, such as transport networks, AI depends on a large-scale, reliable, and sustainable supply of energy to its underlying GPUs. The choices organizations make about where and how their AI infrastructure operates will shape their efficiency, resilience, and competitive position for years to come.

Global investment in data centers nearly doubled from 2022 to reach $500 billion in 2024, according to the International Energy Agency’s (IEA) 2025 Energy and AI report. Data center electricity consumption stood at about 415 terawatt-hours (TWh) in 2024, representing 1.5% of total global electricity use. By 2030, the IEA projects that figure will more than double to about 945 TWh – slightly more than Japan’s entire electricity consumption today.
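The IEA figures above imply a few quantities that are easy to check. The short Python sketch below is illustrative arithmetic only: the function names are hypothetical, and the annual growth rate assumes steady compound growth between 2024 and 2030, which is a simplification rather than something the IEA report states.

```python
# Figures quoted from the IEA's 2025 Energy and AI report (as cited above).
DC_2024_TWH = 415.0      # data center electricity use in 2024
DC_SHARE_2024 = 0.015    # share of global electricity use in 2024
DC_2030_TWH = 945.0      # projected data center electricity use in 2030


def implied_global_twh(dc_twh: float, share: float) -> float:
    """Global electricity use implied by a data center total and its share."""
    return dc_twh / share


def growth_multiple(start: float, end: float) -> float:
    """How many times larger the end value is than the start value."""
    return end / start


def compound_annual_growth(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate that takes start to end (an assumption)."""
    return (end / start) ** (1 / years) - 1


if __name__ == "__main__":
    total = implied_global_twh(DC_2024_TWH, DC_SHARE_2024)
    multiple = growth_multiple(DC_2024_TWH, DC_2030_TWH)
    rate = compound_annual_growth(DC_2024_TWH, DC_2030_TWH, years=6)
    print(f"Implied global electricity use, 2024: {total:,.0f} TWh")
    print(f"Growth multiple, 2024 to 2030: {multiple:.2f}x")
    print(f"Implied compound annual growth: {rate:.1%}")
```

On these inputs, the projection works out to a little under 15% growth per year, which gives a sense of how quickly grid capacity would need to expand to keep up.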

Electricity grids in many regions are already struggling to keep pace. The IEA report estimates that about 20% of planned data center projects could face delays unless grid constraints are addressed. At the same time, AI can be a powerful tool for optimizing energy systems, making it potentially part of the solution.

The cloud is a physical place

The term “cloud computing” can obscure the reality of how AI is powered: every AI workload runs on physical hardware, in a facility, connected to a specific electricity grid.

As such, not all cloud infrastructure is created equal. Choosing a cloud-based AI solution does not necessarily mean it will be more efficient or environmentally responsible than other options. Each data center's resource demands have direct implications for the surrounding communities and environments. Those implications extend beyond direct electricity consumption to water use for cooling, hardware manufacturing, and the local ecosystem.

The carbon intensity of a data center’s operations depends heavily on the energy mix of its local grid. In regions where electricity generation relies primarily on coal or natural gas, the same AI workload will have a higher emissions profile than in regions powered by renewables. The IEA reports that coal accounts for approximately 30% of global electricity generation for data centers, although this varies widely depending on country and region.
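The relationship described here is a simple product: operational emissions equal the energy consumed multiplied by the carbon intensity of the grid supplying it. The Python sketch below illustrates that calculation. The two intensity values and the workload size are placeholder assumptions chosen for illustration, not figures from the IEA report, and the function name is hypothetical.

```python
def operational_emissions_kg(energy_kwh: float, grid_g_co2_per_kwh: float) -> float:
    """Operational CO2 emissions in kilograms for a given energy draw.

    energy_kwh: electricity consumed by the workload.
    grid_g_co2_per_kwh: carbon intensity of the local grid in grams per kWh.
    """
    return energy_kwh * grid_g_co2_per_kwh / 1000.0


# Illustrative, assumed grid intensities (grams of CO2 per kWh).
# Real values vary by country, region, and time of day.
ASSUMED_FOSSIL_HEAVY_GRID = 800.0
ASSUMED_LOW_CARBON_GRID = 50.0

if __name__ == "__main__":
    workload_kwh = 10_000.0  # hypothetical training or inference job
    fossil = operational_emissions_kg(workload_kwh, ASSUMED_FOSSIL_HEAVY_GRID)
    low_carbon = operational_emissions_kg(workload_kwh, ASSUMED_LOW_CARBON_GRID)
    print(f"Fossil-heavy grid: {fossil:,.0f} kg CO2")
    print(f"Low-carbon grid:   {low_carbon:,.0f} kg CO2")
```

The workload is identical in both cases; only the grid changes. That is why location belongs in the infrastructure decision alongside price and performance.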

None of this is an argument against cloud computing. Cloud infrastructure offers scale, flexibility, and cost advantages that are well established. Rather, it means that organizations need to make AI infrastructure decisions based on an analysis of real costs and the associated implications, instead of choosing a particular solution by default.

Where sovereign AI meets operational efficiency

Across industries and governments, the concept of sovereign AI is gaining traction. It refers to infrastructure deployments that give organizations a high degree of control over their AI models, data, compute resources, and deployment model (SaaS, private cloud, or on-premises data centers). This can confer advantages ranging from national security and cost control to regulatory compliance, data security, and privacy.

AI sovereignty has a physical dimension that is often overlooked. On-premises, or on-prem, deployment – where an organization’s AI stack is located directly in its own data centers rather than relying on third-party providers – is fundamental to AI sovereignty.

The greater control allowed by on-prem deployment can sometimes make it a more efficient choice. Running on-prem GPUs at consistently high utilization means more processing power is used for actual work rather than being wasted on idle hardware, reducing environmental costs. Local processing also avoids the energy cost of sending large datasets to the cloud.
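The utilization argument can be made concrete with a simple model: a GPU draws some power even when idle, so the energy attributable to each hour of useful work rises as utilization falls. The Python sketch below shows this under stated assumptions. The active and idle power figures are hypothetical placeholders, not measurements of any real device, and the model ignores cooling and other facility overhead.

```python
def energy_per_useful_hour_wh(
    utilization: float, active_watts: float, idle_watts: float
) -> float:
    """Watt-hours consumed per hour of useful GPU work.

    utilization: fraction of wall-clock time spent on real work (0 < u <= 1).
    active_watts / idle_watts: assumed power draw while busy and while idle.
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in the interval (0, 1]")
    # Average power over one wall-clock hour, busy and idle time combined.
    average_watts = utilization * active_watts + (1.0 - utilization) * idle_watts
    # Divide by the useful fraction to charge idle energy to the work done.
    return average_watts / utilization


if __name__ == "__main__":
    ASSUMED_ACTIVE_W = 700.0  # hypothetical draw under load
    ASSUMED_IDLE_W = 100.0    # hypothetical draw when idle
    for u in (0.25, 0.5, 0.9):
        wh = energy_per_useful_hour_wh(u, ASSUMED_ACTIVE_W, ASSUMED_IDLE_W)
        print(f"utilization {u:.0%}: {wh:,.0f} Wh per useful GPU-hour")
```

Under this model, the overhead from idle time shrinks toward zero as utilization approaches 100%. That is the sense in which consistently busy hardware wastes less energy per unit of work.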

On-prem setups also give organizations more control and choice over their energy sources. An on-prem facility powered by a renewable source such as wind or solar will have a significantly lower carbon footprint than a cloud data center powered by coal. Extending the life of existing hardware also avoids the high environmental costs of manufacturing new equipment.

A peer-reviewed study published in Nature Sustainability in November 2025 found that combining smart facility siting, grid decarbonization, and efficiency improvements in the US could reduce carbon emissions by approximately 73% and water consumption by 86% compared to worst-case scenarios. Location can have a powerful impact. The study found that locating AI servers in states that have lower water scarcity and greater access to renewable power sources could reduce carbon emissions by 49% and the water footprint by 52% in best-case scenarios.

As Cornell University researchers who participated in the study noted, there’s no “silver bullet.” All of the elements need to work together to drive the reductions in carbon and water usage.

This study modeled US AI server infrastructure broadly, not on-prem versus cloud deployments specifically. But the factors it identifies – location, energy source, and operational efficiency – align with those that can be more closely controlled with sovereign, on-prem infrastructure.

The point is not that one type of AI deployment model is always superior; it’s that choices around AI infrastructure should be informed by real-world operational performance rather than default assumptions.

The hardware landscape is changing

The energy constraints described above assume today’s hardware – GPUs, silicon, and conventional data centers. But those constraints aren’t fixed. Hardware itself is evolving as new technologies emerge that could make the infrastructure and energy question look very different in the coming decade.

Neuromorphic computing is an approach to chip design that mimics the brain’s neural and synaptic structures. It represents a fundamental departure from conventional processing and could yield significant efficiency gains. Whereas conventional GPU systems draw power continuously regardless of workload, in neuromorphic systems, only the segment of the network that is actively computing consumes power.

In October 2023, IBM reported that its latest version of a neuromorphic chip, NorthPole, outperformed conventional computing architectures in certain tasks and at a fraction of the energy cost.

Another growing field, known as organoid intelligence, is a type of biocomputing that uses living biological matter. Scientific American reported in 2024 that Swiss company FinalSpark had built a platform powered by lab-grown human brain cell clusters that university researchers are using for computation experiments. By changing the physics of computation, this approach could lead to dramatically lower energy consumption.

These emerging solutions are still in the proof-of-concept stage and don’t solve today’s problems. But they signal that the current paradigm of energy-intensive, GPU-driven computing is not permanent. Organizations that start thinking carefully about where their AI runs and what powers it will be better placed – both for today’s AI infrastructure landscape and the one that is emerging.


Q&A: Five Key Questions on Sovereign AI

According to the International Energy Agency, data center energy consumption reached 415 terawatt-hours in 2024, accounting for about 1.5% of total global electricity use. By 2030, that figure is expected to climb to about 945 TWh – slightly more than Japan’s entire electricity consumption today.