There is no question that artificial intelligence has rapidly transformed the banking and financial services sector, reshaping core functions including fraud detection, credit decisioning, risk management and regulatory compliance. Nearly half of respondents to a 2025 PwC study expect the technology to deliver cost savings of at least 10%, while 84% of financial entities believe that failing to adopt AI and digitalisation within the next five years will negatively affect their business models. Research by McKinsey also projects that generative AI could add $200 billion to $340 billion in value annually to the global banking sector, driven primarily by increased productivity.

However, as AI in finance becomes more complex, a critical gap is emerging: trust. Many of today’s most powerful models operate as “black boxes”, producing outcomes that the business and end users cannot easily analyse or understand, according to Hani Hagras, Chief Science Officer and Global Head of Artificial Intelligence at SBS. And in a heavily regulated industry where decisions directly affect people’s livelihoods, such opacity, or lack of AI explainability, is no longer acceptable, Hagras adds.

As global regulators step up efforts to oversee AI in finance, from the EU’s AI Act to UK supervisory guidance, explainability is becoming a defining requirement for responsible AI. The question is no longer whether banks should adopt AI, but whether they can do so in a way that remains transparent, defensible and worthy of trust, Hagras says, speaking with Andrew Steadman, Chief Product Officer at SBS, at the Adopt AI summit in Paris.

Why is explainability in AI critical in finance?

Explainability means being able to clearly understand how an AI model operates and how it arrives at specific decisions. Today, AI can price a loan in seconds and detect fraud in milliseconds; it is doing things faster and more accurately than any human team ever could.

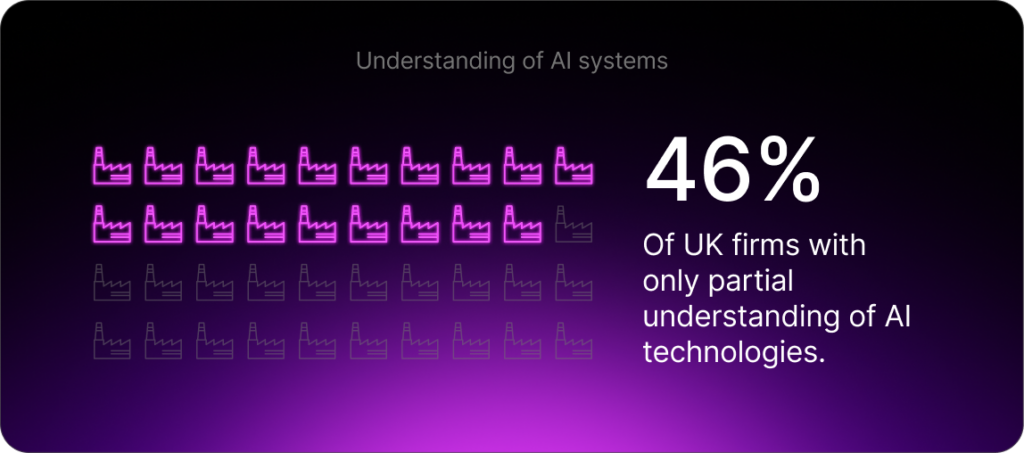

But here’s the catch: ask most executives how AI actually works and they will shrug. This is reflected in a 2024 report co-published by the UK’s Financial Conduct Authority and the Bank of England, which found that 46% of firms have only a “partial understanding” of the AI technologies they use, compared with 34% that have a “complete understanding.”

Meanwhile, the EU’s AI Act describes explainability as a “fundamental obligation” for any system that impacts credit, insurance, or livelihoods. The UK House of Lords AI Select Committee says it is unacceptable for an AI that materially impacts a person’s life to operate without a “satisfactory explanation.”

Why is explainability in AI a challenge in the financial services industry?

AI in finance has become both incredibly powerful and dangerously obscure. The same complexity that makes it accurate also makes it uninterpretable. In finance, that is unacceptable. These AI models decide who gets a loan, how markets move and how capital flows. If we can’t explain those decisions, we lose more than compliance: we lose trust.

The Bank of England’s Financial Policy Committee’s view on the risks is clear: “While the distinct features of AI are the source of its unique benefits, they can also be additional sources of risk,” it says.

“For example, the complexity of some AI models – coupled with their ability to change dynamically – poses new challenges around the predictability, explainability and transparency of model outputs.”

We’ve already seen issues arise from AI models making discriminatory credit decisions. Perhaps one of the best-known examples is the Apple Card, which was investigated by New York’s Department of Financial Services in 2019. On paper, the algorithm behind the card worked: it was highly accurate, fast and scalable. But in practice, customers reported that it gave women lower credit limits than men, even when their financial profiles appeared comparable.

Apple called it a technical glitch. Critics called it discrimination. But the truth is that it was neither: it was a system no one could easily explain or augment with human insight and governance. And that is the point: explainability is no longer an academic ideal but a matter of survival for banks, for regulators and for every customer who deserves to know why a machine made a decision that shapes their life.
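One practical check suggested by this case is a counterfactual “flip test”: score the same applicant twice, changing only a protected attribute, and flag any difference in the output. The Python sketch below is illustrative only. `flip_test`, `hypothetical_limit_model` and the sample profiles are hypothetical names and data invented for this example, and the model carries a deliberately planted bias so the test has something to find. It is not a description of how the Apple Card was, or should have been, tested.

```python
from typing import Callable

Applicant = dict[str, object]


def flip_test(model: Callable[[Applicant], float],
              profiles: list[Applicant],
              attribute: str,
              values: tuple[object, object],
              tolerance: float = 0.0) -> list[tuple[Applicant, float, float]]:
    """Score each profile twice, changing only `attribute` between the two
    given values, and return (profile, output_a, output_b) for every profile
    whose outputs differ by more than `tolerance`."""
    flagged = []
    for profile in profiles:
        out_a = model({**profile, attribute: values[0]})
        out_b = model({**profile, attribute: values[1]})
        if abs(out_a - out_b) > tolerance:
            flagged.append((profile, out_a, out_b))
    return flagged


def hypothetical_limit_model(applicant: Applicant) -> float:
    # Hypothetical credit-limit model with a deliberately planted bias,
    # used only to show what the flip test catches.
    limit = 0.2 * float(applicant["income"])
    if applicant["gender"] == "female":
        limit *= 0.8
    return limit


# Hypothetical sample profiles; the test overrides "gender" itself.
profiles = [
    {"income": 50_000, "gender": "male"},
    {"income": 80_000, "gender": "female"},
]
for profile, a, b in flip_test(hypothetical_limit_model, profiles,
                               "gender", ("male", "female")):
    print(f"income={profile['income']}: male -> {a:.0f}, female -> {b:.0f}")
```

A flip test only catches direct dependence on the attribute. A model that never sees gender can still discriminate through correlated proxies, which is why understanding which features drive each decision remains necessary.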

Does explainability mean we are trading intelligence for clarity?

That is a myth. Historically, explainable systems were simpler: banks used logistic regression, which was easy to audit but limited in accuracy because it relied on a small number of features to remain explainable. Then came deep learning, which uses thousands of features and delivers very high accuracy but is practically impossible to read, analyse or augment with human knowledge. Everybody assumed you couldn’t have both.
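To see why logistic regression was easy to audit, note that its log-odds are a plain weighted sum, so each feature’s contribution to a decision can be read off directly. A minimal Python sketch, assuming a hypothetical three-feature repayment model whose feature names and coefficients are made up for illustration rather than fitted to real data:

```python
import math

# Hypothetical coefficients for an illustrative repayment model; they are
# made-up numbers, not fitted to any real data.
INTERCEPT = 1.0
COEFFICIENTS = {
    "debt_ratio": -3.0,        # higher debt ratio lowers the odds of repayment
    "years_of_history": 0.2,   # longer credit history raises the odds
    "missed_payments": -0.8,   # each missed payment lowers the odds
}


def explain_logistic(applicant: dict[str, float]) -> tuple[float, dict[str, float]]:
    """Return the predicted repayment probability and each feature's
    additive contribution (coefficient * value) to the log-odds."""
    contributions = {name: coef * applicant[name] for name, coef in COEFFICIENTS.items()}
    log_odds = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))  # logistic (sigmoid) link
    return probability, contributions


applicant = {"debt_ratio": 0.5, "years_of_history": 10, "missed_payments": 1}
prob, parts = explain_logistic(applicant)
print(f"P(repay) = {prob:.3f}")
# List contributions from most negative to most positive.
for name, value in sorted(parts.items(), key=lambda kv: kv[1]):
    print(f"  {name:>16}: {value:+.2f}")
```

Because the contributions add up exactly to the log-odds, an auditor can check every decision by hand. The price is that such a model only captures what a few hand-picked features can express.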

However, recent work has found that explainable models now come close to the accuracy of black-box systems in many domains, including financial applications. So the old excuse that you need a mysterious, over-complicated model to get better results no longer holds.

You can achieve comparable precision while maintaining full transparency, auditability and regulatory peace of mind. With explainable AI, we embed reasoning structures directly into models, so transparency no longer comes at the cost of performance. Explainability now means disciplined intelligence: AI that thinks like a scientist and speaks like a banker.

How should banking CTOs or chief risk officers approach explainability?

For predictive AI (which makes predictions affecting people’s lives), there are two roads:

- Post-hoc explainability: You build a black-box model, then use tools such as SHAP or LIME to rationalise its output. However, black-box models are purely data-driven (only as good as the underlying data), so starting with one means we cannot augment the model with human knowledge to cover data gaps, bias and similar problems. It is like reading smoke signals after the fire.

- Explainable-by-design: Transparency is baked in from day one. Models use rule-based, linguistic hybrid architectures that combine data-driven knowledge (patterns learned from data) with expert-driven knowledge, such as the intuition of human bankers.
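Tools such as SHAP, mentioned under the post-hoc option, build on Shapley values from cooperative game theory: a feature’s attribution is its weighted average marginal effect on the output across all subsets of the other features. The sketch below computes them exactly, from scratch, for a hypothetical three-feature scoring function that we treat as opaque and can only query. It does not use the `shap` library, and holding “absent” features at a baseline value is one common convention among several, adopted here as an assumption.

```python
from itertools import combinations
from math import factorial
from typing import Callable

Features = dict[str, float]


def opaque_model(x: Features) -> float:
    # Hypothetical stand-in for a black-box score; we pretend we cannot
    # read this formula and may only query it.
    return (500 + 120 * x["repayment_history"] - 150 * x["debt_ratio"]
            + 40 * x["repayment_history"] * x["income_stability"])


def shapley_values(model: Callable[[Features], float],
                   x: Features, baseline: Features) -> Features:
    """Exact Shapley values: each feature's weighted average marginal
    contribution over every subset of the other features. Features outside
    a subset are held at their baseline value (an assumed convention)."""
    names = list(x)
    n = len(names)
    phi = {}
    for name in names:
        others = [f for f in names if f != name]
        total = 0.0
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for subsets of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                without = {f: (x[f] if f in subset else baseline[f]) for f in names}
                with_feature = {**without, name: x[name]}
                total += weight * (model(with_feature) - model(without))
        phi[name] = total
    return phi


# Hypothetical applicant and reference ("average") profile.
applicant = {"repayment_history": 0.9, "debt_ratio": 0.6, "income_stability": 0.8}
baseline = {"repayment_history": 0.5, "debt_ratio": 0.3, "income_stability": 0.5}
phi = shapley_values(opaque_model, applicant, baseline)
for name, value in phi.items():
    print(f"{name}: {value:+.1f}")
```

The attributions sum to the difference between the applicant’s score and the baseline score, but they are computed after the fact from queries to a finished model; nothing in this process lets an expert correct a data gap inside the model. Exact enumeration also grows exponentially with the number of features, which is why practical tools rely on approximations or model-specific algorithms.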

Explainable-by-design systems think in pros and cons. For example: “Your score is 600 because your debt ratio is high, but your repayment history is strong.”
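A minimal Python sketch of how a rule-based scorer can produce that kind of sentence, assuming hypothetical expert rules: the feature names, thresholds, point values and base score of 600 are illustrative assumptions, not the method Hagras describes or any real scorecard. It shows only the expert-rule side; in a hybrid system some rules or thresholds would be learned from data, and a linguistic (for example fuzzy-logic) system would use graded terms such as “high” rather than the hard cut-offs used here.

```python
from dataclasses import dataclass, field
from typing import Callable

Applicant = dict[str, float]

# Hypothetical expert-written rules: (condition, points, plain-language reason).
# Thresholds and point values are illustrative assumptions, not a real scorecard.
RULES: list[tuple[Callable[[Applicant], bool], int, str]] = [
    (lambda a: a["debt_ratio"] > 0.40, -50, "your debt ratio is high"),
    (lambda a: a["debt_ratio"] <= 0.20, +30, "your debt ratio is low"),
    (lambda a: a["on_time_rate"] >= 0.95, +50, "your repayment history is strong"),
    (lambda a: a["on_time_rate"] < 0.80, -60, "your repayment history is weak"),
]
BASE_SCORE = 600  # hypothetical starting score


@dataclass
class Decision:
    score: int
    pros: list[str] = field(default_factory=list)
    cons: list[str] = field(default_factory=list)

    def explain(self) -> str:
        """Turn the rules that fired into a pros-and-cons sentence."""
        parts = [" and ".join(group) for group in (self.cons, self.pros) if group]
        if not parts:
            return f"Your score is {self.score}; no rule adjusted the base score."
        return f"Your score is {self.score} because " + ", but ".join(parts) + "."


def score_applicant(applicant: Applicant) -> Decision:
    """Start from the base score and apply every rule whose condition holds,
    recording each reason on the pro or con side."""
    decision = Decision(score=BASE_SCORE)
    for condition, points, reason in RULES:
        if condition(applicant):
            decision.score += points
            (decision.pros if points > 0 else decision.cons).append(reason)
    return decision


print(score_applicant({"debt_ratio": 0.55, "on_time_rate": 0.98}).explain())
# prints: Your score is 600 because your debt ratio is high, but your repayment history is strong.
```

Because every point adjustment is tied to a stated reason, the explanation is the model’s actual logic rather than an after-the-fact approximation, and an expert can add a rule to cover a known data gap.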

For generative AI, explainability needs to be embedded in the output itself, explaining in lay-person language the steps and analysis the system took to generate a given output. The result is plain language and traceable logic, turning AI into a colleague instead of a mystery.

The data reinforces this. According to PwC’s 2025 Responsible AI Survey, nearly 60% of respondents say that responsible AI initiatives improve return on investment and organisational efficiency, while 55% say they enhance both customer experience and innovation. Whenever there is a major shift in technology, there are winners and losers. And if you want to be in the winning group, you have to understand one thing: AI in finance will only scale where it can be trusted.

But all of that potential hangs on a single word: responsibility. Responsible means being auditable, fair and explainable. Without explainability, you get bias, investigations and customer backlash. With it, you earn trust, adoption and competitive advantage. Finance is still a human industry and confidence is the real currency.

Questions and Answers

What is explainability in AI?

Explainability in AI means clearly understanding how the AI model works and articulating how it makes specific decisions. In financial services, this involves providing transparent, traceable logic for decisions about loans, credit scores, fraud detection, and other critical financial determinations.

How does the EU AI Act affect explainability requirements?

The EU AI Act defines explainability as a fundamental obligation for AI systems that affect credit, insurance, or individuals’ livelihoods. Financial institutions must be able to document, audit and explain how AI models work, including their training data, logic and decision-making processes.

What role does explainability play in responsible AI?

Explainability is a core pillar of responsible AI, alongside fairness, auditability and accountability. Without explainability, institutions cannot prove that their AI systems behave ethically or lawfully. With it, AI becomes defensible, trustworthy and aligned with human values.

Is explainability only a compliance issue?

No. While explainability is a regulatory requirement, it is also a strategic advantage. Transparent AI systems are easier to scale, safer to deploy and more resilient to regulatory change. Explainability enables responsible innovation rather than slowing it down. At the end of the day, humans trust what they can understand.